Nintendo 64 Part 18: Normals and Lighting

The Nintendo 64’s RDP can perform Gouraud shading, which lets you assign vertex colors and blend smoothly between them. The vertex colors can be taken from the model data or calculated dynamically by the RSP microcode, and the F3DEX2 microcode supports simple lighting calculations to assign vertex colors.

Creating a Model with Normals

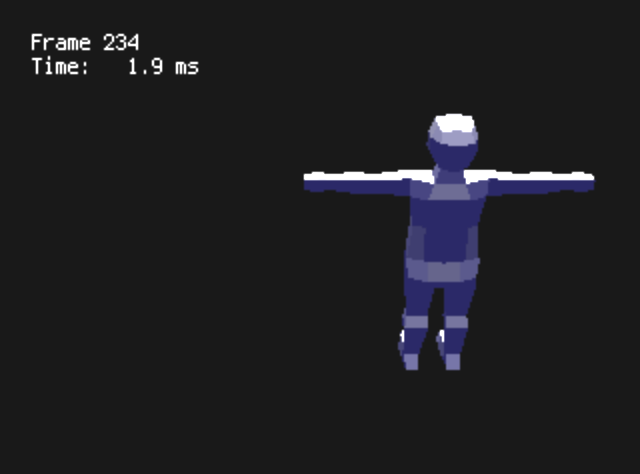

At first I tried importing a model I created in Blender with normals, but by default, Blender uses flat shading. This means that each face has a single normal, rather than interpolating between vertexes.

Not to knock the low-poly style, but this isn’t the look I’m going for, and with my rendering and model import code, flat shading is actually less efficient. Because normals are stored in the vertex, vertexes can’t be shared between faces that have different normals. This model has 384 triangles and 754 vertexes!
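For context on why the normal lives in the vertex: in the SDK’s gbi.h, the lit vertex format (`Vtx_tn`) stores a signed 8-bit normal in the bytes that the unlit format uses for the vertex color. Here is a rough sketch that mirrors that layout with a stand-in struct. `LitVertex`, `pack_component`, and `pack_normal` are names I made up for illustration, and scaling by 127 is just one plausible way a converter might quantize a unit normal.

```c
#include <stdint.h>

/* Stand-in mirroring the layout of Vtx_tn from gbi.h (16 bytes). */
typedef struct {
    int16_t  ob[3];  /* object-space position */
    uint16_t flag;   /* unused */
    int16_t  tc[2];  /* texture coordinates */
    int8_t   n[3];   /* normal, signed 8-bit per component */
    uint8_t  a;      /* alpha */
} LitVertex;

/* Quantize one component of a unit normal to the range -127..127
   (assumed convention, with round-to-nearest). */
static int8_t pack_component(float x) {
    float s = x * 127.0f;
    if (s > 127.0f) s = 127.0f;
    if (s < -127.0f) s = -127.0f;
    return (int8_t)(s >= 0.0f ? s + 0.5f : s - 0.5f);
}

/* Write a unit-length float normal into the vertex. */
void pack_normal(LitVertex *v, const float normal[3]) {
    for (int i = 0; i < 3; i++) {
        v->n[i] = pack_component(normal[i]);
    }
}
```

Since there is exactly one normal per vertex record, two faces can only share a vertex if they agree on its normal as well as its position.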

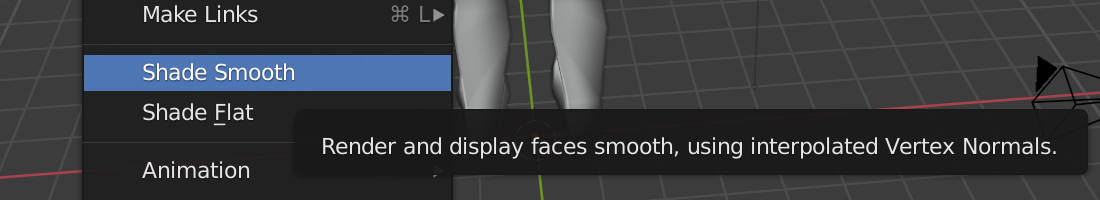

The fix is easy: set smooth shading in Blender.

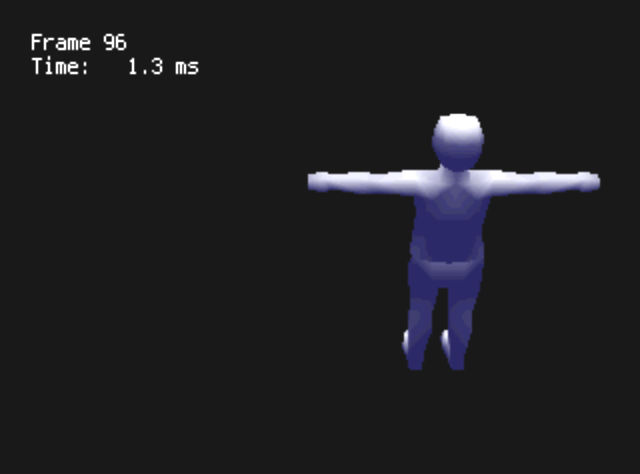

This reduces the number of vertexes in the model to only 208, which is more reasonable. The number of triangles is the same, of course.
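To see the effect on a shape small enough to count by hand, here is a sketch that deduplicates the vertexes of a cube by (position, normal). This is not my actual importer; `Vert`, `add_unique`, and `count_cube_verts` are hypothetical names, and the "smooth" normal is simply taken to point from the center toward the corner.

```c
#include <string.h>

/* Hypothetical importer-side vertex: integer position plus normal direction. */
typedef struct {
    int pos[3];
    int nrm[3];
} Vert;

/* Append v unless an identical vertex is already present; return the new count. */
static int add_unique(Vert *out, int count, const Vert *v) {
    for (int i = 0; i < count; i++) {
        if (memcmp(&out[i], v, sizeof *v) == 0) {
            return count;
        }
    }
    out[count] = *v;
    return count + 1;
}

/* Count the unique vertexes of a cube with corners at (+-1, +-1, +-1).
   smooth != 0: each corner has one normal shared by every face touching it.
   smooth == 0: each corner takes the normal of the face being emitted. */
int count_cube_verts(int smooth) {
    Vert out[24];
    int count = 0;
    for (int axis = 0; axis < 3; axis++) {
        for (int sign = -1; sign <= 1; sign += 2) {
            /* The four corners of the face perpendicular to `axis`. */
            for (int c = 0; c < 4; c++) {
                Vert v;
                memset(&v, 0, sizeof v);
                v.pos[axis] = sign;
                v.pos[(axis + 1) % 3] = (c & 1) ? 1 : -1;
                v.pos[(axis + 2) % 3] = (c & 2) ? 1 : -1;
                if (smooth) {
                    memcpy(v.nrm, v.pos, sizeof v.nrm);
                } else {
                    v.nrm[axis] = sign;
                }
                count = add_unique(out, count, &v);
            }
        }
    }
    return count;
}
```

With flat shading, `count_cube_verts(0)` finds 24 unique vertexes (four per face), while `count_cube_verts(1)` finds only the 8 corners. It is the same effect as the drop from 754 to 208 above, just on a smaller model.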

Lighting on the RSP

The other half of the process is to set up the lighting state for the RSP. The lighting structure is fairly simple. F3DEX2 supports up to 8 lights: one ambient light and 7 directional lights. Each light has an RGB value, and the directional lights have a direction vector. I’ll define a very simple dim blue ambient color with RGB (16,16,64), and define a “sun” light source from the +Z direction (0,0,100) whose color adds up to white when combined with the ambient color.
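To make the arithmetic concrete, here is a floating-point sketch of the usual ambient-plus-directional model: each directional light contributes its color scaled by the clamped dot product of the normal and the light direction. This is only a conceptual model; the real microcode works in fixed point, and `Color`, `clamp_u8`, and `shade_vertex` are illustrative names, not SDK functions.

```c
#include <stdint.h>

typedef struct { uint8_t r, g, b; } Color;

/* Round to nearest and clamp to the 0..255 range of a color channel. */
static uint8_t clamp_u8(float x) {
    if (x < 0.0f) return 0;
    if (x > 255.0f) return 255;
    return (uint8_t)(x + 0.5f);
}

/* Shade one vertex with an ambient light and a single directional light.
   Both `light_dir` and `normal` are assumed to be unit length. */
Color shade_vertex(Color ambient, Color light,
                   const float light_dir[3], const float normal[3]) {
    float d = normal[0] * light_dir[0]
            + normal[1] * light_dir[1]
            + normal[2] * light_dir[2];
    if (d < 0.0f) {
        d = 0.0f; /* surfaces facing away get ambient only */
    }
    Color out = {
        clamp_u8(ambient.r + light.r * d),
        clamp_u8(ambient.g + light.g * d),
        clamp_u8(ambient.b + light.b * d),
    };
    return out;
}
```

With ambient (16,16,64) and a sun color of (255 - 16, 255 - 16, 255 - 64), a vertex facing straight at the light comes out as (255,255,255), and one facing away stays at the ambient (16,16,64).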

    static const Lights1 lights =
        gdSPDefLights1(16, 16, 64, // Ambient
                       255 - 16, 255 - 16, 255 - 64, 0, 0, 100); // Sun

Add the necessary lighting setup to the display list, and use the SHADE color combiner mode to use vertex colors for the output:

gSPSetLights1(dl++, lights);

gSPSetGeometryMode(dl++, G_LIGHTING);

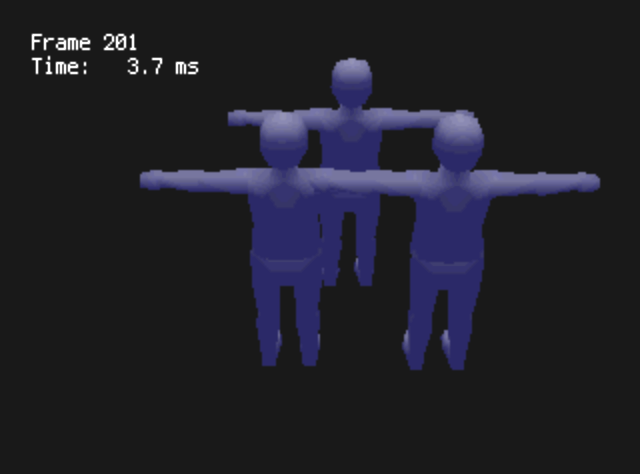

gDPSetCombineMode(dl++, G_CC_SHADE, G_CC_SHADE);That works! Except I notice a problem…

Fixed-Point Strikes Back

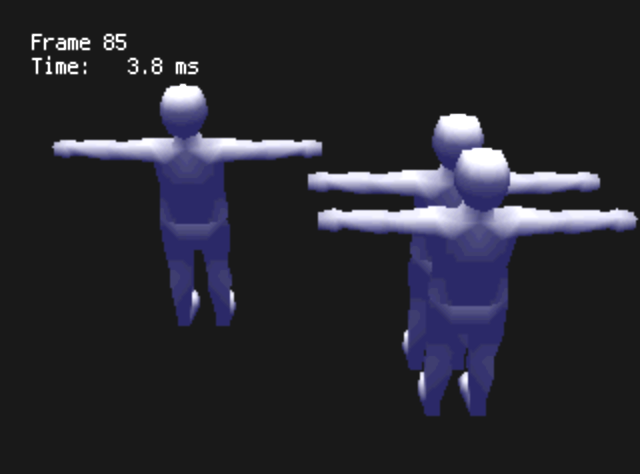

The problem showed up when I experimented with rendering the scene at different scales. Ideally, if the camera and the entire scene are scaled equally, the scene should look identical. However, at smaller scales, the lighting got dimmer. It turns out that this is explained in §11.7.3.3 “Note on Light Direction” in the programming manual:

However, there are some problems that can arise from using light directions with magnitudes that are too large or too small. The Light direction is multiplied times the Modelview Matrix. If the Modelview matrix has a scale associated with it then the light direction might overflow or underflow. […] If L*S is too big then the normalization of the lights will overflow and you will get lights that are too bright. If L*S is too small then the normalization will underflow and you will get lights that are too dim.

In other words, since the lighting direction I’m using, (0,0,100), has a magnitude of 100, I should not use a modelview scale below 1/100.

This was a problem because my model importer was scaling models to fill the available vertex coordinate precision. Since the vertex coordinates have 16 bits, I decided to scale the model to a size of about 2^15. I thought the extra precision might be nice, but in order to fit the model in the scene, I had to use a very small scaling factor, and this made the lighting calculation underflow.

To fix it, I changed the model converter program to scale models to the same size that they will use in-game. This way, the modelview scale will just be 1. This gives me leeway to scale models up and down, since underflow doesn’t occur until the scale is 1/100 or so, and overflow doesn’t occur until the scale is around 300.
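As a rough sanity check, the two limits above can be turned into a rule of thumb on L*S, the light direction magnitude times the modelview scale. With a magnitude of 100, underflow near 1/100 and overflow near 300 correspond to L*S of roughly 1 and 30000. The sketch below encodes those bounds. They are inferred from my own observations rather than taken from the manual, and `check_light_scale` and its constants are hypothetical names.

```c
/* Approximate safe range for L*S, inferred from the figures above
   (assumption: not documented limits). */
#define LS_MIN 1.0
#define LS_MAX 30000.0

typedef enum {
    LIGHT_SCALE_UNDERFLOW = -1,
    LIGHT_SCALE_OK = 0,
    LIGHT_SCALE_OVERFLOW = 1
} LightScaleStatus;

/* Classify a light direction and a uniform modelview scale.
   Compares squared values so no square root is needed. */
LightScaleStatus check_light_scale(const double dir[3], double modelview_scale) {
    double mag_sq = dir[0] * dir[0] + dir[1] * dir[1] + dir[2] * dir[2];
    double ls_sq = mag_sq * modelview_scale * modelview_scale;
    if (ls_sq < LS_MIN * LS_MIN) {
        return LIGHT_SCALE_UNDERFLOW;
    }
    if (ls_sq > LS_MAX * LS_MAX) {
        return LIGHT_SCALE_OVERFLOW;
    }
    return LIGHT_SCALE_OK;
}
```

For example, a model that should be about one unit across in the scene but was stored at a size of 2^15 needs a modelview scale of about 1/32768. With the (0,0,100) sun direction, that gives L*S of about 0.003, well into underflow territory. At a scale of 1, L*S is 100 and sits comfortably inside the range.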

This is not usually a problem with more modern, floating-point systems.